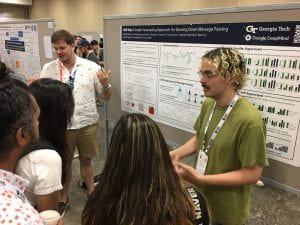

We are excited to present Half-Hop at ICML 2023 in Honolulu, Hawaii!

Half-Hop is a new plug-and-play network augmentation for message passing neural networks. More info and code coming soon!

by Eva Dyer

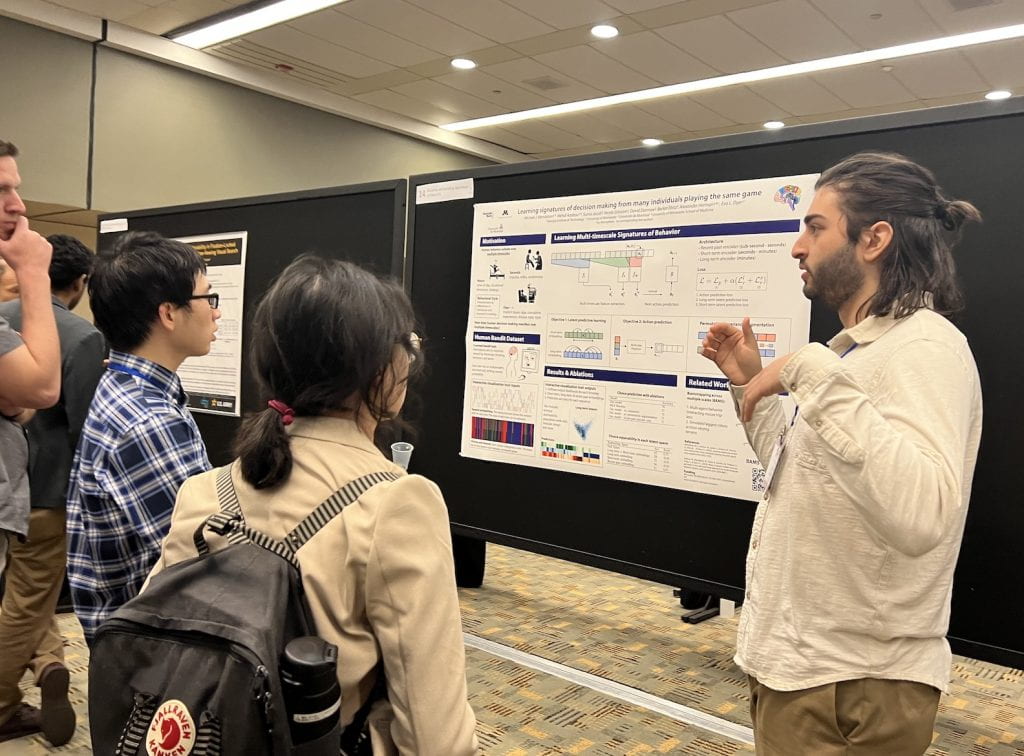

The lab presented two papers at the IEEE Neural Engineering (NER) conference in Baltimore, MD on April 25-27! Michael presented his first first-author paper and absolutely crushed it.

Check out the papers here:

M. Mendelson, M. Azabou, S. Jacob, N. Grissom, D.P. Darrow, B. Ebitz, A. Herman, E.L. Dyer: Learning signatures of decision making from many individuals playing the same game, 11th IEEE EMBS Conference on Neural Engineering (NER’23), April 2023, (Paper)

by Eva Dyer

MAIN 2022 was held in Montreal on Dec 12-13 and brought together researchers across the interface of neuroscience and AI.

Check out Eva’s talk on the challenges of neural decoding and the embedded interaction transformer!

by Eva Dyer

The lab had two papers accepted at NeurIPS this year! We are excited to attend the meeting in New Orleans!

EIT – In this work, we introduce a new space-time separable transformer architecture for building representations of dynamics called Embedded Interaction Transformer (EIT). When applied to the activity from populations of neurons where size and ordering may not be consistent across datasets, we show how EIT can be used to unlock across-animal transfer for neural decoders!

MTNeuro – In this work, we introduce a new multi-task benchmark for evaluating models of brain structure across multiple spatial scales and at different levels of abstraction. We provide new baseline models and ways to extract representations from 3D high-resolution (~1 µm) neuroimaging data spanning many regions of interest with diverse anatomy in the mouse brain.

by Eva Dyer

We are excited to have a new NSF project funded with Vidya Muthukumar, Tom Goldstein (U Maryland), and Mark Davenport on design principles and theory for data augmentation.

Check out our recent preprint that builds a framework for understanding the impact of augmentation on learning and generalization.

by Eva Dyer

Eva wins an NSF CAREER award to further accelerate the lab’s study of neural circuits and representation learning from large-scale neural recordings!

Check out more info here:

https://www.bme.gatech.edu/bme/news/eva-dyer-using-nsf-career-award-make-neuron-behavior-connection